Lesson E: Tile-based localization

Main authors: Simon Rohou, Maël Godard

This lesson addresses a state-estimation problem arising in indoor mobile robotics. A differential-drive robot evolves on a floor covered with square tiles of known width \(L\). Unlike range-based localization, we do not rely on external landmarks. Instead, the floor itself provides sparse exteroceptive information: each time the passive support caster crosses a grout line between two tiles, a shock appears in the gyroscope data and can be detected after a simple filtering stage.

The platform considered here is a TurtleBot Burger-like robot with two motorized wheels and one passive caster. Wheel odometers provide two signals \(u[0](\cdot)\) and \(u[1](\cdot)\), from which the signed longitudinal speed can be reconstructed.

In addition, we assume that a heading sensor provides bounded measurements. The tile-crossing instants are already extracted from accelerometers data and provided in a txt file. The objective is to combine these data in order to re-estimate the robot position over time.

This problem is interesting for two reasons. First, the observations are sparse and event-based: the exteroceptive information is a list of time stamps, not a continuous signal. Second, each event is ambiguous: when a shock is detected, we do not know a priori whether the caster crossed a vertical grout line or a horizontal one, nor which line of the infinite grid was involved. Each observation therefore defines a union of feasible sets, which is a natural setting for interval analysis and contractor programming.

Formalism

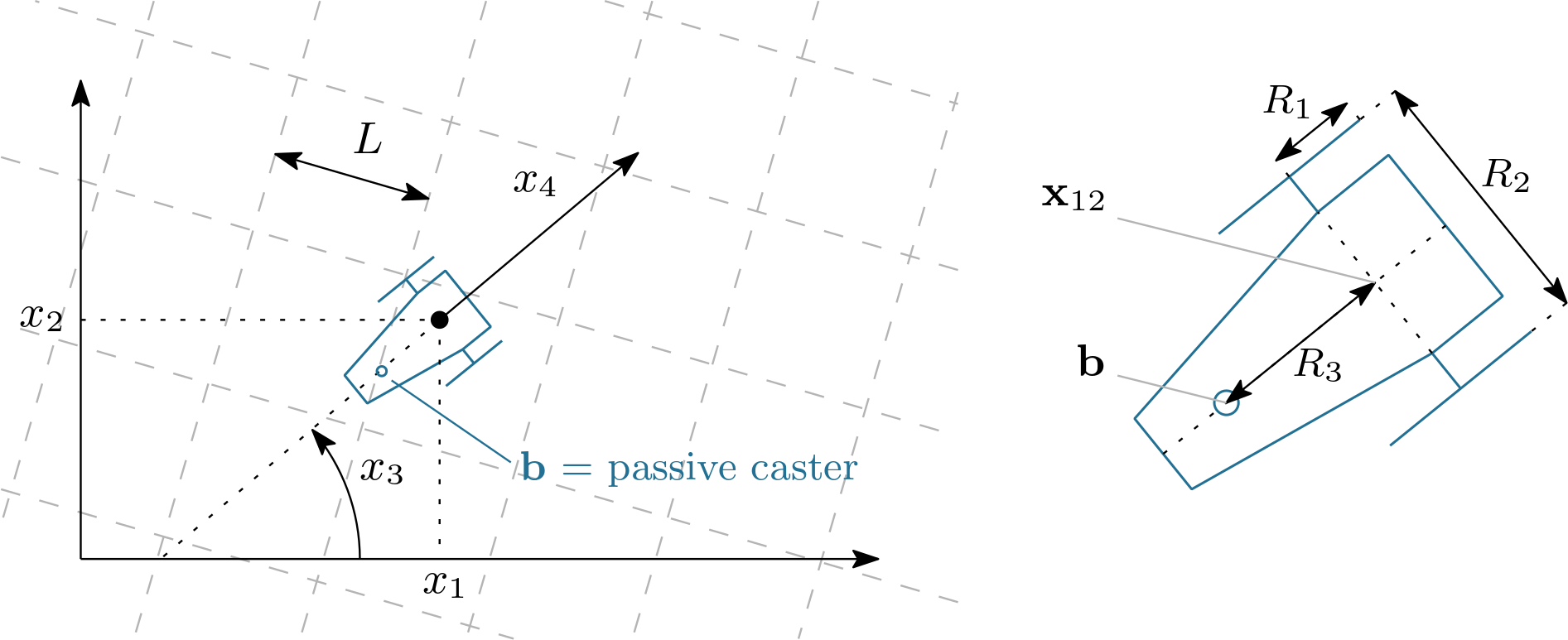

We denote by \(\mathbf{x}(t)\in\mathbb{R}^4\) a state vector in which:

\((x_1,x_2)^\intercal\) is the position of the axle midpoint in the plane;

\(x_3\) is the heading of the robot body;

\(x_4\) is the signed longitudinal speed.

The robot parameters are:

\(R_1\): radius of each motorized wheel;

\(R_2\): distance between the two motorized wheels;

\(R_3\): distance between the axle midpoint and the passive caster.

Robot notations used in this lesson. \((x_1,x_2)\) is the axle-midpoint position, \(x_3\) the heading, \(x_4\) the longitudinal speed, \(R_1\) the wheel radius, \(R_2\) the distance between the two driving wheels, and \(R_3\) the distance between axle and passive caster. When \(x_4<0\), the robot moves backward and the caster becomes the leading contact point with respect to the direction of motion.

The wheel odometers provide two wheel-angle trajectories \(u[0](\cdot)\) and \(u[1](\cdot)\). The usual differential-drive relations give

In practice, the heading is provided and these measurements will be preferred to the one obtained by integrating \(\dot x_3\), in order to avoid drift. The planar kinematics of the axle midpoint is therefore modeled by

The passive caster (support wheel)

The passive caster is located on the longitudinal axis of the robot, behind the axle in the robot frame. Its position in the world frame is thus

A useful practical remark is that the robot crosses tile joints more sharply when driving backward. In that case, the passive caster becomes the leading contact point with respect to the direction of motion, so the shock induced by a grout line is generally more pronounced and easier to detect. In this dataset, the integration is therefore performed with a reverse speed, i.e. \(x_4(t)\) is mostly negative. This does not change the geometry of the robot: the caster is still given by Eq. (3), but it becomes the first element of the robot to meet the joint along the direction of motion.

The tile floor

The floor is modeled as an infinite square tiling of width \(L\). The set of grout lines is the union of vertical lines \(x=kL\) and horizontal lines \(y=kL\), for all \(k\in\mathbb{Z}\). If \(t_i\) is one of the extracted detection times, the caster position must satisfy

where \(\varepsilon_L>0\) accounts for tile and grout imperfections, as well as small geometric mismatches between the ideal square grid model and the actual floor.

Tile-crossing events

The fact that the filtered shock is detected slightly after the physical crossing is treated separately at the temporal level: in practice, the contraction will be applied at a time slightly before \(t_i\).

A convenient implicit formulation uses the modulo operator:

This is the observation model we will encode with a contractor.

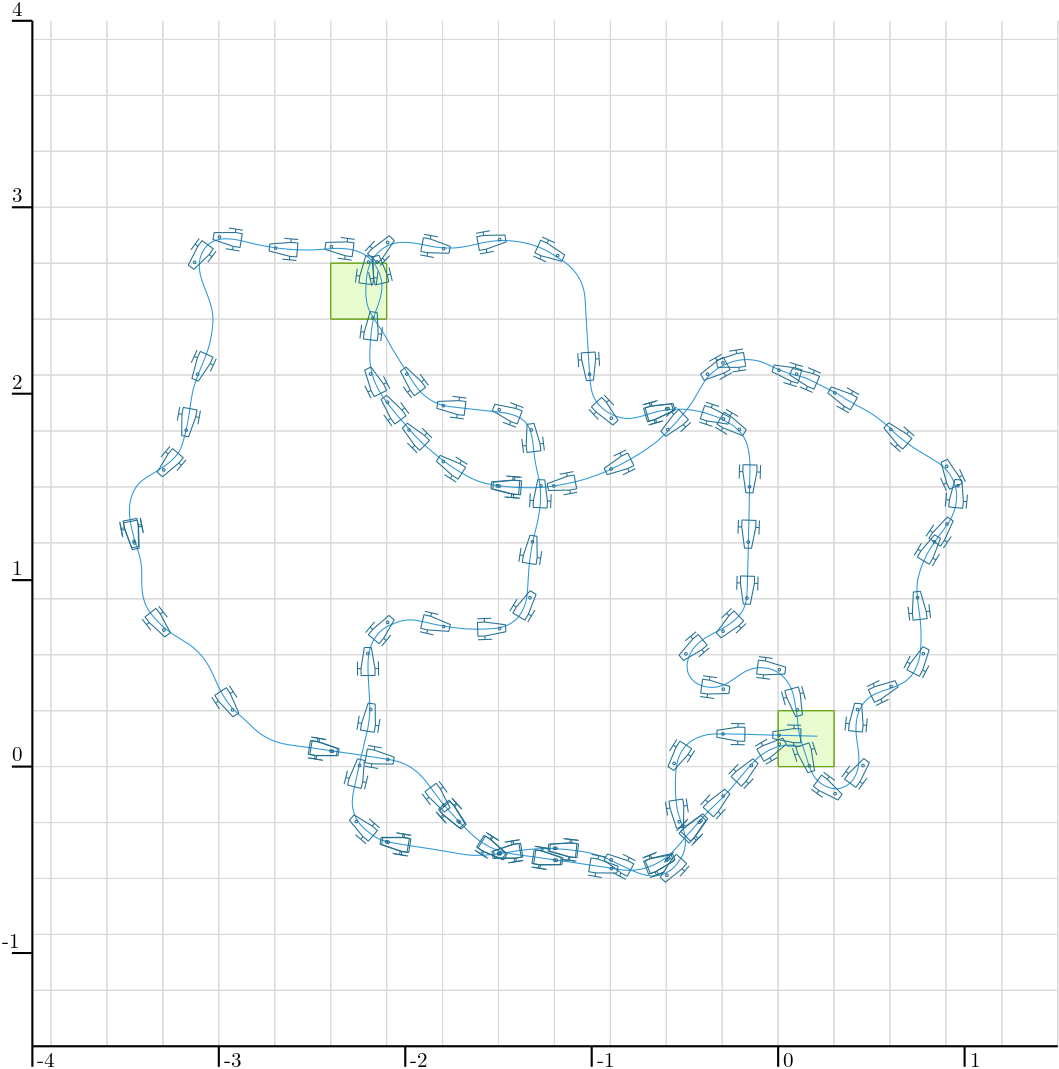

Overview of the experiment. The blue curve is the actual robot trajectory. The small markers indicate the detected tile-crossing events associated with the passive support wheel. As expected, these detections are distributed along the grout lines of the square tiling and provide sparse, event-based information that will be exploited for localization.

Provided data

The lesson uses three data files stored in the local directory ./data:

Files with extension .cdc are Codac binary data files, used to serialize and reload boxes, trajectories, and other Codac objects. See how to serialize Codac objects.

Initialization and data loading

Exercise

E.0. Update your current version of Codac. In Python, for instance:

pip install codac --upgrade --pre

# Option --pre has to be set because Codac v2 is only available in pre-release

To verify that you have the correct version, you can use:

python -m pip show codac

> Name: codac

Version: 2.0.0.dev28

The required version for this tutorial is 2.0.0.dev28 or later.

Exercise

E.1. Imports. Start with the imports below.

from codac import *

# We explicitly import `builtins` because the previous line shadows Python's `min` and `max`

import builtins as py

import math

#include <codac>

using namespace std;

using namespace codac2;

E.2. Loading sampled trajectories.

Load the binary .cdc files into sampled trajectories. We further resample the wheel odometry on the same temporal support as pos.

# Loading trajectories

pos = SampledTraj_Vector()

with open("./data/herdeudedac_tiles_pos.cdc", "rb") as f:

deserialize(f, pos)

u = SampledTraj_Vector()

with open("./data/herdeudedac_tiles_odo.cdc", "rb") as f:

deserialize(f, u)

u = u.sampled_as(pos)

// Loading trajectories

SampledTraj<Vector> pos;

{

std::ifstream in("../data/herdeudedac_tiles_pos.cdc", std::ios::binary);

deserialize(in, pos);

in.close();

}

SampledTraj<Vector> u;

{

std::ifstream in("../data/herdeudedac_tiles_odo.cdc", std::ios::binary);

deserialize(in, u);

in.close();

u = u.sampled_as(pos);

}

E.3. Reading detection times. Write a helper function that reads the detection times from the text file:

def tile_times(file):

with open(file, "r", encoding="utf-8") as f:

t = []

for token in f:

token = token.strip()

if token:

t.append(float(token))

return t

vector<double> tile_times(const std::string& file)

{

ifstream f(file);

if(!f)

throw runtime_error("Unable to open joints detections file.");

vector<double> t;

string token;

while(getline(f, token, '\n'))

if(!token.empty())

t.push_back(stod(token));

return t;

}

# Observations (times of tiles detections)

T = tile_times("./data/herdeudedac_tiles_detections.txt")

// Observations (times of tiles detections)

vector<double> T = tile_times("../data/herdeudedac_tiles_detections.txt");

E.4. Drawing the map.

Write a function draw_map(X,L) that draws the infinite grid, clipped to a bounding box X. As in the reference example, two tiles are highlighted in green.

# Map parameters

L = 0.3 # tile width

L_eps = 1e-2 # tile thickness

// Map parameters

double L = 0.3; // tile width

double L_eps = 1e-2; // tile thickness

def draw_map(X, L):

for d in range(2):

kmin = py.min(0, math.floor(X[d].lb() / L) + 1)

kmax = py.max(0, math.ceil(X[d].ub() / L) - 1)

for k in range(kmin, kmax + 1):

a = k * L

if d == 0:

DefaultFigure.draw_line(

[[a, X[1].lb()], [a, X[1].ub()]],

Color.light_gray()

)

else:

DefaultFigure.draw_line(

[[X[0].lb(), a], [X[0].ub(), a]],

Color.light_gray()

)

for v in (Vector([0, 0]), Vector([-8, 8])):

DefaultFigure.draw_box(

IntervalVector(L * v) + IntervalVector([[0, L], [0, L]]),

[Color.dark_green(), Color.green(0.1)]

)

void draw_map(const IntervalVector& X, double L)

{

for(int d = 0; d < 2; d++)

{

int kmin = std::min(0, (int)(std::floor(X[d].lb()/L))+1);

int kmax = std::max(0, (int)(std::ceil (X[d].ub()/L))-1);

for(int k = kmin; k <= kmax; k++)

{

double a = k*L;

if(d == 0)

DefaultFigure::draw_line({{a, X[1].lb()}, {a, X[1].ub()}}, Color::light_gray());

else

DefaultFigure::draw_line({{X[0].lb(), a}, {X[0].ub(), a}}, Color::light_gray());

}

}

for(const auto& v : { Vector({0,0}), Vector({-8,8})})

DefaultFigure::draw_box(

IntervalVector(L*v) + IntervalVector({{0,L},{0,L}}),

{Color::dark_green(),Color::green(0.1)});

}

E.5. The tile-map contractor. Build a contractor expressing that a 2D point lies close to either a vertical grout line or a horizontal grout line. In other words, your contractor must encode

You may introduce one analytic function for the first coordinate and one for the second one, as in the previous lessons, and then combine the corresponding contractors with a union. A union of contractors is represented by the CtcUnion class, and can be called directly using the operator |.

E.6. Verify that your tile-map contractor is correct, in a separate view. Before using the contractor in the localization pipeline, it is a good idea to test it alone. In a separate view, pave a 2D search box with your contractor and verify visually that the feasible set is a family of thin horizontal and vertical strips spaced by \(L\).

fig_ctcmap = Figure2D("Testing tile-map contractor", GraphicOutput.VIBES)

fig_ctcmap.set_axes(axis(0,[-1,1]), axis(1,[-1,1])).auto_scale()

fig_ctcmap.pave([[-1,1],[-1,1]], your_contractor, 1e-2)

Figure2D fig_ctcmap("Testing tile-map contractor", GraphicOutput::VIBES);

fig_ctcmap.set_axes(axis(0,{-1,1}), axis(1,{-1,1})).auto_scale();

fig_ctcmap.pave({{-1,1},{-1,1}}, your_contractor, 1e-2);

The grid must be centered at the origin: the grout lines are expected at \(x=kL\) and \(y=kL\), so in particular one vertical strip and one horizontal strip must pass through \((0,0)\).

This is a very useful debugging step. If the paving does not look like a grid of strips, or if the grid is shifted and does not cross the origin, the contractor is not doing what it should.

Dead reckoning from recorded data

We now reconstruct the motion information that will be used to initialize the position tube.

The recorded heading and wheel-odometry trajectories are loaded from the files in ./data.

Exercise

E.7. Reconstruct the speed and heading trajectories. Using Eq. (1), reconstruct the speed trajectory \(x_4(\cdot)\) from the two odometers, and reconstruct a heading trajectory. In the code below, the odometric heading is first computed from \(\dot x_3(t)=\frac{R_1}{R_2}(\dot u[1](t)-\dot u[0](t))\), then replaced by the directly measured heading.

Use the following robot parameters and initial condition:

# Robot parameters

R1 = 0.033

R2 = 0.16

R3 = 8e-2

x0 = Vector([L/2, L/2, 0.018, 0.0]) # initial state

traj_spd = R1 * (u[1].derivative() + u[0].derivative()) / 2

traj_hdg = (R1 * (u[1].derivative() - u[0].derivative()) / R2).primitive() + x0[2]

# Better: use the directly measured heading to avoid odometric drift.

traj_hdg = continuous_traj(pos[2])

// Robot parameters

double R1 = 0.033, R2 = 0.16, R3 = 8e-2;

Vector x0 { L/2, L/2, 0.018, 0 }; // initial state

SampledTraj traj_spd = R1*(u[1].derivative()+u[0].derivative())/2;

SampledTraj traj_hdg = (R1*(u[1].derivative()-u[0].derivative())/R2).primitive() + x0[2];

traj_hdg = continuous_traj(pos[2]); // better using the measured heading (avoiding errors due to drift)

E.8. Create the heading and speed tubes. We now enclose the measured heading and reconstructed speed by bounded tubes. The assumptions used in the reference script are:

heading uncertainty: \(\pm 2\times 10^{-3}\) rad;

speed uncertainty: \(\pm 9\times 10^{-3}\) m/s.

These bounds are encoded by inflating the corresponding sampled trajectories after creating a temporal discretization.

tdomain = create_tdomain(u.tdomain(), 5e-2) # last argument is time discretization

tube_hdg = SlicedTube(tdomain, traj_hdg)

tube_hdg.inflate(2e-3)

tube_spd = SlicedTube(tdomain, traj_spd)

tube_spd.inflate(9e-3)

auto tdomain = create_tdomain(u.tdomain(), 5e-2); // last argument is time discretization

SlicedTube tube_hdg(tdomain, traj_hdg);

tube_hdg.inflate(2e-3);

SlicedTube tube_spd(tdomain, traj_spd);

tube_spd.inflate(9e-3);

E.9. Compute the planar velocity tube. Using Eq. (2), define the analytic evolution function

and evaluate it over the heading and speed tubes.

# Computing tube of velocities

v_hdg,v_spd = ScalarVar(),ScalarVar()

f_evol = AnalyticFunction([v_hdg,v_spd], [

v_spd*cos(v_hdg),

v_spd*sin(v_hdg)

])

tube_v12 = f_evol.tube_eval(tube_hdg,tube_spd)

// Computing tube of velocities

ScalarVar v_hdg, v_spd;

AnalyticFunction f_evol({v_hdg,v_spd}, {

v_spd*cos(v_hdg),

v_spd*sin(v_hdg)

});

SlicedTube tube_v12 = f_evol.tube_eval(tube_hdg,tube_spd);

E.10. Integrate an initial position tube. Let \([\mathbf{x}_{12}](\cdot)\) denote the position tube. We initialize it from the initial position box

then we integrate the velocity tube.

# Computing initial tube of positions

ix0 = IntervalVector(x0.subvector(0,1))

ix0.inflate(L/3)

tube_x12 = tube_v12.primitive(ix0)

// Computing initial tube of positions

IntervalVector ix0(x0.subvector(0,1));

ix0.inflate(L/3);

SlicedTube tube_x12 = tube_v12.primitive(ix0);

E.11. Display the dead-reckoning enclosure. Open a figure, draw the map, and display the dead-reckoning position tube. At this stage, no tile-crossing event has been used yet.

X = IntervalVector([[-5,2],[-2,5]])

DefaultFigure.set_window_properties([50,50],[1000,1000])

DefaultFigure.set_axes(axis(0,X[0]),axis(1,X[1])).auto_scale()

draw_map(X,L)

DefaultFigure.draw_tube(tube_x12, [Color.light_gray(),Color.light_gray()])

IntervalVector X({{-5,2},{-2,5}});

DefaultFigure::set_window_properties({50,50},{1000,1000});

DefaultFigure::set_axes(axis(0,X[0]), axis(1,X[1])).auto_scale();

draw_map(X,L);

DefaultFigure::draw_tube(tube_x12, {Color::light_gray(),Color::light_gray()});

Localization from tile-crossing events

We are now ready to inject the event-based observations into the position tube. The derivative contractor will be used to propagate local contractions over time.

Exercise

E.12. Prepare the contractors. Instantiate the derivative contractor and your tile-map contractor.

ctc_deriv = CtcDeriv()

# ctc_map is already defined from E.5

CtcDeriv ctc_deriv;

// ctc_map is already defined from E.5

E.13. Contract the tile-crossing events and run a fixpoint loop. At each detected instant \(t_i\), the shock extracted from the accelerometer is not expected to coincide exactly with the ideal geometric crossing time. The mechanical contact, the vibration propagation, the filtering, and the detection threshold may introduce a small delay. For this reason, the reference implementation evaluates the constraint at a slightly earlier instant

The idea is the following: just before the detected shock, the caster should already be very close to a grout line. Starting from the position box \([\mathbf{x}_{12}](t_j)\) and the heading interval \([x_3](t_j)\), compute the corresponding caster box \([\mathbf{b}_i]\) with Eq. (3), contract this box with your tile-map contractor, then project the contraction back onto the position box \([\mathbf{x}_{12}](t_j)\) and propagate it through the whole tube with a derivative contractor inside a fixpoint loop.

Implement this step yourself. The methodology is the same as in Lessons B, C and D: express each piece of available information as a contractor, apply these contractors repeatedly in a fixpoint loop, and let the contractions propagate through the tube until no significant improvement is obtained.

E.14. Display the final contracted tube. Once the fixpoint loop has been implemented, display the contracted tube and overlay the grid.

DefaultFigure.draw_tube(tube_x12, ColorMap.blue_tube())

draw_map(X,L) # drawing again the map over the tube

DefaultFigure::draw_tube(tube_x12, ColorMap::blue_tube());

draw_map(X,L); // drawing again the map over the tube

After solving the full localization problem, it is convenient to define a helper that draws a trajectory, the robot poses corresponding to the detected tile crossings, and the implied caster positions.

Exercise

E.15. Draw the detections along a trajectory.

Define a helper draw_estim that, given a trajectory x and the detection times T, draws the trajectory, a robot icon at each detection instant, and the corresponding caster point.

def draw_estim(x, T, R3, traj_hdg, traj_col, detec_col):

DefaultFigure.draw_trajectory(x, traj_col)

for ti in T:

pi = cart_prod(x(ti).subvector(0,1), traj_hdg(ti))

bi = Vector([

pi[0] + R3*math.cos(pi[2]+PI),

pi[1] + R3*math.sin(pi[2]+PI)

])

DefaultFigure.draw_tank(pi.subvector(0,2)+[0,0,PI], 0.15, detec_col)

DefaultFigure.draw_circle(bi, 7e-3, detec_col)

draw_estim(tube_x12.mid(), T, R3, traj_hdg, Color.white(), Color.dark_blue())

auto draw_estim = [R3,&traj_hdg,&T](const SampledTraj<Vector>& x, const Color& traj_col, const Color& detec_col)

{

DefaultFigure::draw_trajectory(x, {traj_col,"--"});

for(const auto& ti : T)

{

Vector pi = cart_prod(x(ti).subvector(0,1),traj_hdg(ti));

Vector bi { pi[0]-R3*cos(pi[2]), pi[1]-R3*sin(pi[2]) };

DefaultFigure::draw_tank(pi.subvector(0,2), .15, detec_col);

DefaultFigure::draw_circle(bi, 5e-3, detec_col);

}

};

draw_estim(tube_x12.mid<Vector>(), Color::white(), Color::dark_blue());

When the estimated tube has become sufficiently thin, the support wheel markers obtained from its midpoint trajectory should appear approximately on the tile grout lines.

E.16. Verify a double passage through a box. We know from the experiment that the robot passes twice through the box \([[-2.4,-2.1],[2.4,2.7]]\). This box is already drawn (from E.4). Check visually that the contracted position tube also passes through it twice.

Conclusion

In this lesson, we used interval analysis and contractor programming to solve a localization problem based on sparse tile-crossing events. Unlike landmark-based localization, the available observations here are only time stamps, and each event is intrinsically ambiguous: it only tells us that the passive support wheel was close to either a vertical or a horizontal grout line of an infinite grid.

This example illustrates that even weak and ambiguous observations can significantly improve localization when they are modeled carefully and combined with the system dynamics. More broadly, it shows how contractor programming can exploit non-standard sensing modalities in a guaranteed set-membership framework.

A final remark is that this problem is not especially comfortable for standard Kalman filtering, because the tile-crossing observations are sparse, delayed, disjunctive, and naturally multimodal: each event only indicates proximity to either a vertical or a horizontal grout line of a periodic grid. Particle filters are in principle more suitable, since they can represent multi-hypothesis state distributions, but they may require a large number of particles to cope with the ambiguity and periodicity of the observations. By contrast, the interval-contractor approach used here is well matched to the set-valued nature of the problem: it handles ambiguity directly, preserves guarantees, and exploits the geometric structure of the tile map in a natural way.